ElectricAccelerator 7.0 was officially released a couple weeks ago now, on April 12, 2013. This version, our 26th feature release in 11 years, incorporates performance features that are truly nothing less than revolutionary: dependency optimization and parse avoidance. To my knowledge, no other build tool in the world has comparable functionality, is working on comparable functionality or is even capable of adding such functionality. Together these features have enabled us to dramatically cut Android 4.1.1 (Jelly Bean) build times, compared to Accelerator 6.2:

- Full, from-scratch builds are 35% faster

- “No touch” incremental builds are an astonishing 89% faster

In fact, even on this highly optimized, parallel-friendly build, Accelerator 7.0 is faster than GNU make, on the same number of cores. On a 48-core system gmake -j 48 builds Android 4.1.1 in 15 minutes. Accelerator 7.0 on the same system? 12 minutes, 21 seconds: 17.5% faster.

Read on for more information about the key new features in ElectricAccelerator 7.0.

Dependency optimization: use only what you need

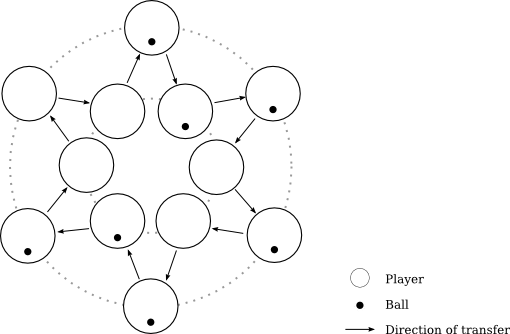

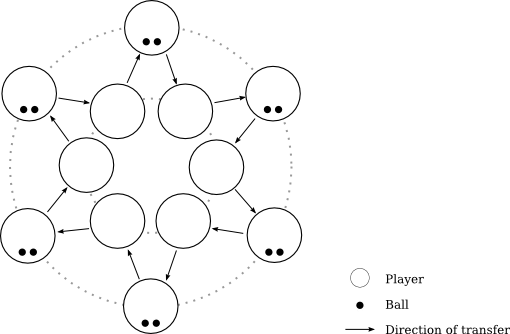

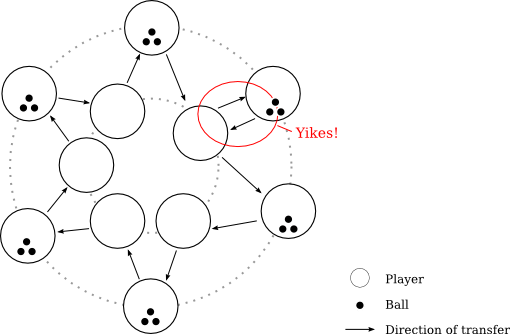

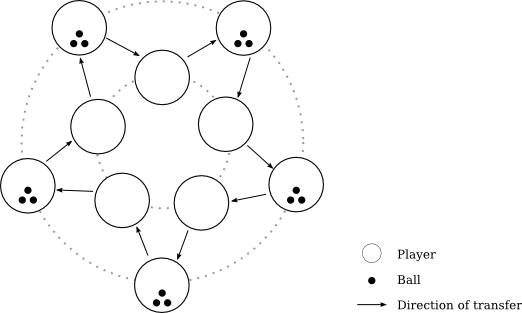

Dependency optimization is a new application of the data that is used to power Accelerator’s conflict detection and correction features. But where conflict detection is all about finding missing dependencies in makefiles, dependency optimization is focused on finding surplus dependencies, which drag down build performance by needlessly limiting parallelism. Here’s a simple example:

```make
foo: bar
	@echo abc > foo && sleep 10

bar:
	@echo def > bar && sleep 10
```

In this makefile you can easily see that the dependency between foo and bar is superfluous: foo's recipe never reads the file that bar creates. Unfortunately GNU make is shackled by the dependencies specified in the makefile and is thus obliged to run the two jobs serially. In contrast, with dependency optimization enabled, emake can detect this inefficiency and ignore the unnecessary dependency, so foo and bar run in parallel.

Obviously you could trivially fix this simple makefile, but in real-world builds that may be difficult or impossible to do manually. For example, in the Android 4.1.1 build, there are about 2 million explicitly specified dependencies in the makefiles. For a typical variant build, only about 300 thousand are really required: over 85% of the dependencies are unnecessary. And that's in the Android build, which is regarded by some as a paragon of parallel-build cleanliness — imagine the opportunities for improvement in builds that don't have Google's resources to devote to the problem.

To enable dependency optimization in your builds, add --emake-optimize-deps=1 to your emake command line. The first build with that option enabled will "learn" the characteristics of the build; the second and subsequent builds will use that information to improve performance.
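To make the idea concrete, here is a toy Python model of learn-then-prune, built on loudly simplified assumptions. It assumes each job's file reads and writes are already known. Accelerator gathers comparable usage data for conflict detection, but its real data format and pruning rules are not described here. The model keeps a declared dependency only if the target read a file that the prerequisite wrote, and a plain dict stands in for whatever is stored between builds. The names `required_deps`, `critical_path` and `build` are hypothetical. Applied to the foo/bar makefile, the first build honors the declared dependency and records what was really needed, and the second build uses that record.

```python
# Toy model of dependency optimization; NOT Accelerator's implementation.
# Assumption: each job's file reads/writes are already known, and a plain
# dict ("history") stands in for whatever is kept between builds.


def required_deps(declared, reads, writes):
    """Keep a declared edge (target -> prereq) only if the target job read
    at least one file that the prerequisite job wrote."""
    return {
        target: [p for p in prereqs
                 if writes.get(p, set()) & reads.get(target, set())]
        for target, prereqs in declared.items()
    }


def critical_path(deps, durations):
    """Best possible build time with unlimited cores: the length of the
    longest chain of jobs linked by dependencies."""
    finish = {}

    def finish_time(job):
        if job not in finish:
            start = max((finish_time(p) for p in deps.get(job, [])), default=0)
            finish[job] = start + durations[job]
        return finish[job]

    return max(finish_time(job) for job in durations)


def build(declared, durations, reads, writes, history):
    """One build: use learned deps if a previous build left any, otherwise
    the declared deps; then record which deps were really needed."""
    deps = history.get("deps", declared)
    elapsed = critical_path(deps, durations)
    history["deps"] = required_deps(declared, reads, writes)
    return elapsed


# The foo/bar makefile: foo declares bar as a prerequisite but never reads
# the file bar creates.
declared = {"foo": ["bar"], "bar": []}
durations = {"foo": 10, "bar": 10}          # seconds (the sleep 10 calls)
reads = {"foo": set(), "bar": set()}
writes = {"foo": {"foo"}, "bar": {"bar"}}

history = {}
print(build(declared, durations, reads, writes, history))  # 20: serial
print(build(declared, durations, reads, writes, history))  # 10: parallel
```

The critical path serves as the yardstick because it bounds build time when enough cores are available. Removing a dependency edge can only shorten the longest chain, never lengthen it.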

Parse avoidance: the fastest job is the one you don't have to do

A common complaint with large build systems is incremental build performance — specifically, the long lag between the time that the user invokes make and the time that make starts the first compile. Some have even gone so far as to invent entirely new build tools with a specific focus on this problem. Parse avoidance delivers similar performance gains without requiring the painful (perhaps impossible!) conversion to a new build tool. For example, a "no touch" incremental build of Android 4.1.1 takes close to 5 minutes with Accelerator 6.2, but only about 30 seconds with Accelerator 7.0.

On complex builds, a large portion of the lag comes from parsing makefiles. The net result of that effort is a dependency graph annotated with targets and the commands needed to generate them. The core idea underpinning parse avoidance is the realization that we need not redo that work on every build. Most of the time, the dependency graph and its annotations are unchanged from one build to the next. Why not cache the result of the parse and reuse it in the next build? So that's what we did.
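The caching idea fits in a few lines of Python. This is a simplified model, not Accelerator's implementation. It assumes the cache key is a hash of the makefile contents plus the command-line arguments, and `parse` and `ParseCache` are hypothetical stand-ins. `parse` understands only `target: prereqs` lines. A real parse cache also has to notice anything else that can change the parse result, such as environment variables, included files, and `$(wildcard ...)` or `$(shell ...)` results. This sketch ignores all of that.

```python
import hashlib
import json

# Simplified model of parse avoidance; NOT Accelerator's implementation.
# Assumption: the cache key covers only makefile contents and arguments.


def cache_key(makefiles, argv):
    """Hash every makefile (name and contents) plus the command-line args."""
    h = hashlib.sha256()
    for name in sorted(makefiles):
        h.update(name.encode() + b"\0" + makefiles[name].encode() + b"\0")
    h.update(json.dumps(argv).encode())
    return h.hexdigest()


def parse(makefiles):
    """Stand-in for the expensive parse: maps target -> prerequisites for
    'target: prereq ...' lines; tab-indented recipe lines are skipped."""
    graph = {}
    for text in makefiles.values():
        for line in text.splitlines():
            if ":" in line and not line.startswith("\t"):
                target, _, prereqs = line.partition(":")
                graph[target.strip()] = prereqs.split()
    return graph


class ParseCache:
    def __init__(self):
        self.entries = {}   # cache key -> parsed dependency graph
        self.parses = 0     # how many times we actually had to parse

    def load_graph(self, makefiles, argv):
        key = cache_key(makefiles, argv)
        if key not in self.entries:         # cache miss: parse and store
            self.parses += 1
            self.entries[key] = parse(makefiles)
        return self.entries[key]            # cache hit: reuse the graph


makefiles = {"Makefile": "foo: bar\n\t@echo abc > foo\nbar:\n\t@echo def > bar\n"}
cache = ParseCache()
cache.load_graph(makefiles, ["all"])   # first build: parses
cache.load_graph(makefiles, ["all"])   # "no touch" rebuild: reuses the cache
print(cache.parses)                    # 1
makefiles["Makefile"] += "baz: foo\n"
cache.load_graph(makefiles, ["all"])   # makefile edited: parses again
print(cache.parses)                    # 2
```

Keying on content rather than timestamps means that a makefile touched without being changed still hits the cache. That choice is part of this sketch's assumptions, not a documented property of Accelerator.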

To enable parse avoidance in your builds, add --emake-parse-avoidance=1 to your emake command line. The first build with that option will generate a parse result to add to the cache; the second and subsequent builds will reload the cached result in lieu of reparsing the makefiles from scratch.

Other goodies

In addition to the marquee features, Accelerator 7.0 includes dozens of other improvements. Here are some of the highlights:

- Limited GNU make 3.82 support. When run with --emake-emulation=gmake3.82, emake now allows assignment modifiers (such as ?=) on define-style variable definitions.

- Order-only prerequisites in NMAKE emulation mode. GNU make introduced the concept of order-only prerequisites in 3.80. With this release we've extended our NMAKE emulation with the same concept.

- Enhancements to electrify. The biggest improvement is the ability to match full command lines to decide whether or not a particular command should be executed remotely (Linux only). Previously, electrify could only match against the process name.
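Order-only prerequisites are easy to misread, so here is a small Python model of the GNU make semantics that the NMAKE emulation now borrows. GNU make lists them after a `|` in the prerequisite list. The NMAKE-emulation syntax is not covered in this post, so the sketch models only the behavior. Both kinds of prerequisite must be brought up to date before the target, but only normal prerequisites are compared against the target's timestamp. The `plan` function and the file names are hypothetical.

```python
# Simplified model of GNU make's rebuild decision for one target, showing how
# order-only prerequisites differ from normal ones. Timestamps are numbers.


def plan(target, normal, order_only, mtime):
    """Return (prerequisites to bring up to date first, rebuild target?).

    mtime maps file -> modification time; a file not in mtime is absent.
    """
    run_first = list(normal) + list(order_only)  # both constrain ordering
    if target not in mtime:
        return run_first, True                   # missing target: build it
    # Only normal prerequisites are compared with the target's timestamp;
    # a missing normal prerequisite is treated as newer than the target.
    stale = any(mtime.get(p, float("inf")) > mtime[target] for p in normal)
    return run_first, stale


# An object file whose output directory was touched after the last compile.
mtime = {"foo.c": 50, "obj/foo.o": 100, "obj": 200}
print(plan("obj/foo.o", ["foo.c"], ["obj"], mtime))   # (['foo.c', 'obj'], False)
print(plan("obj/foo.o", ["foo.c", "obj"], [], mtime)) # (['foo.c', 'obj'], True)
```

The output directory is the classic use case. A directory's timestamp changes every time a file is added to it, so listing it as a normal prerequisite would force needless rebuilds, while listing it as order-only still guarantees it exists first.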

What's next?

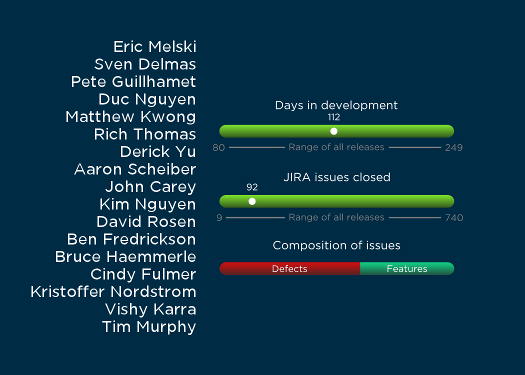

In my opinion, Accelerator 7.0 is the most exciting release we've put out in close to two years, with truly ground-breaking new functionality and performance improvements. It's not often that you can legitimately claim double-digit percentage performance improvements in a mature product. I'm incredibly proud of my team for this accomplishment.

With that said: there's always room to do more. We're already gearing up for the next release. The exact release content is not yet nailed down, but on the short list of candidates is a new job scheduler, to enable still better performance; "buddy cluster" facilities, to allow the use of Accelerator without requiring dedicated hardware; and possibly some form of acceleration for Maven-based builds. Let's go!