In a previous article I covered the basic conflict detection algorithm in ElectricMake. It’s surprisingly simple, which is one of its strengths. But if ElectricMake strictly adhered to the simple definition of a conflict, many builds would be needlessly serialized, sapping performance. Over the years we’ve made a variety of tweaks to the core algorithm, adding support for special cases to improve performance. Here are some of those special cases.

Non-existence conflicts

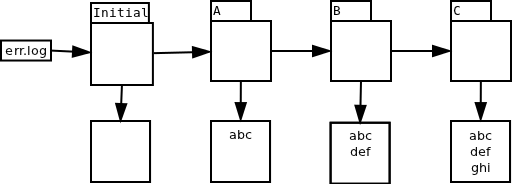

One obvious enhancement is to ignore conflicts when the two versions are technically different, but effectively the same. The simplest example is when there are two versions of a file which both indicate non-existence, such as the initial version and the version created by job C in this chain for file foo:

Suppose that job D, which falls between C and E in serial order, runs before any other jobs finish. At runtime, D sees the initial version, but strictly speaking, if it had run in serial order it would have seen the version created by job C. But the two versions are functionally identical — both indicate that the file does not exist. From the perspective of the commands run in job D, there is no detectable difference in behavior regardless of which of these two versions was used. Therefore emake can safely ignore this conflict.
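As a rough illustration of that rule, here is a minimal Python sketch. The `Version` class and the `equivalent` and `has_conflict` helpers are hypothetical names invented for this example and do not reflect emake's internal data structures; the only assumption carried over from the text is that two versions which both record "file does not exist" are interchangeable.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Version:
    """One entry in a file's version chain (hypothetical model)."""
    creator: str              # job that produced this version
    content: Optional[bytes]  # None means "the file does not exist"


def equivalent(a: Version, b: Version) -> bool:
    """Same version, or two versions that both say the file is absent."""
    if a is b:
        return True
    return a.content is None and b.content is None


def has_conflict(seen: Version, serial: Version) -> bool:
    """Conflict only if the version actually read differs observably
    from the version the job would have read in serial order."""
    return not equivalent(seen, serial)


initial = Version(creator="initial", content=None)  # foo does not exist yet
after_c = Version(creator="C", content=None)        # job C left foo absent
with_data = Version(creator="B", content=b"data")   # a version with content

# Job D ran early and saw the initial version; serially it would see C's.
print(has_conflict(seen=initial, serial=after_c))    # False
# Had the serial-order version held real content, the conflict would stand.
print(has_conflict(seen=initial, serial=with_data))  # True
```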

Directory creation conflicts

A common make idiom is mkdir -p $(dir $@) — that is, create the directory that will contain the output file, if it doesn’t already exist. This idiom is often used like so:

    $(OUTDIR)/foo.o: foo.cpp
    	@mkdir -p $(dir $@)
    	@g++ -c -o $@ $^

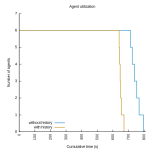

Suppose that the directory does not exist when the build starts, and several jobs that employ this idiom start at the same time. At runtime they will each see the same filesystem state — namely, that the output directory does not exist. Each job will therefore create the directory. But in reality, had these jobs run serially, only the first job would have created the directory; the others would have seen the version created by the first job, and done nothing with the directory themselves. According to the simple definition of a conflict, all but the first (serial order) job would be considered in conflict. For builds without a history file expressing the dependency between the later jobs and the first, the performance impact would be disastrous.

Prior to Accelerator 5.4, there were two options for avoiding this performance hit: use a good history file, or arrange for the directories to be created before the build runs. Accelerator 5.4 introduced a refinement to the conflict detection algorithm that lets emake suppress the conflict between jobs that both attempt to create the same directory. As a result, even builds with no history file avoid conflicts in this scenario, without sacrificing correctness. (NB: you need not take any special action to benefit from this improvement.)
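To make the difference concrete, the following Python sketch compares the strict rule with the relaxed one. It is only a toy model: the `Usage` record, the operation labels, and the `conflicts` and `conflicted_jobs` helpers are assumptions made for illustration, not emake's actual implementation.

```python
from dataclasses import dataclass
from itertools import combinations
from typing import List


@dataclass(frozen=True)
class Usage:
    job: str   # job name; serial order is A, B, C
    path: str  # filesystem path the job touched
    op: str    # hypothetical labels: "create_dir", "write", "read"


def conflicts(earlier: Usage, later: Usage, suppress_mkdir: bool) -> bool:
    """Would `later` be in conflict if it ran before `earlier` committed?"""
    if earlier.path != later.path or earlier.op == "read":
        return False  # unrelated paths, or the earlier job changed nothing
    if suppress_mkdir and earlier.op == later.op == "create_dir":
        return False  # either way, the directory ends up existing
    return True


def conflicted_jobs(usages: List[Usage], suppress_mkdir: bool) -> List[str]:
    """Jobs that conflict with some job earlier in serial order."""
    return sorted({later.job
                   for earlier, later in combinations(usages, 2)
                   if conflicts(earlier, later, suppress_mkdir)})


# Three jobs that all ran `mkdir -p out` while the directory was missing.
usages = [Usage(job, "out", "create_dir") for job in "ABC"]

print(conflicted_jobs(usages, suppress_mkdir=False))  # ['B', 'C']
print(conflicted_jobs(usages, suppress_mkdir=True))   # []
```

Under the strict rule every job except the first is flagged, which matches the scenario described above; with the directory-creation exception none of them are.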

Appending to files

Another surprisingly common idiom is to append error messages to a log file as the build proceeds:

    $(OUTDIR)/foo.o: foo.cpp
    	@g++ -c -o $@ $^ 2>> err.log

Implicitly, each append operation is dependent on the previous appends to the file — after all, how will you know which offset the new content should be written to if you don’t know how big the file was to begin with? In terms of file versions, you can imagine a naive implementation treating each append to the file as creating a complete new version of the file:

The problem of course is that you’ll get conflicts if you try to run all of these jobs in parallel. Suppose all three jobs, A, B and C start at the same time. They will each see the initial version, an empty file, but if run serially, only A would have seen that version. B would have seen the version created by A; C would have seen the version created by B.

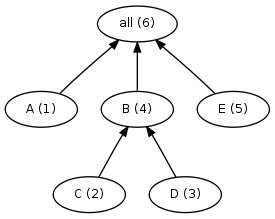

This example is particularly interesting because emake cannot sort this out on its own: as long as the usage reported for err.log is the very generic “this file was modified, here’s the new content” message normally used for changes to the content of an existing file, emake has no choice but to declare conflicts and serialize these jobs. Fortunately, emake is not limited to that simple usage record. The EFS can detect that each modification is strictly appending to the file, with no regard to the prior contents, and include that detail in the usage report. Thus informed, emake can record fragments of the file, rather than the entire file content:

Since emake now knows that the jobs are not dependent on the prior content of the file, it need not declare conflicts between the jobs, even if they run in parallel. As emake commits the modifications from each job, it stitches the fragments together into a single file, with each fragment in the correct order relative to the other pieces.
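The stitching step can be sketched in a few lines of Python. This is a simplified model under stated assumptions: each job's appended bytes are kept as a fragment keyed by the job's position in serial order, and the `stitch` function and the sample log lines are invented for the example.

```python
from typing import Dict, List, Tuple


def stitch(initial: bytes, fragments: Dict[int, bytes]) -> bytes:
    """Concatenate append fragments in serial order, not completion order."""
    out = bytearray(initial)
    for serial_index in sorted(fragments):
        out += fragments[serial_index]
    return bytes(out)


# (serial index, job, appended bytes), listed in the order the jobs
# happened to finish when run in parallel.
finished: List[Tuple[int, str, bytes]] = [
    (2, "C", b"c.cpp: warning\n"),
    (0, "A", b"a.cpp: error\n"),
    (1, "B", b"b.cpp: warning\n"),
]

fragments = {serial: data for serial, _job, data in finished}
log = stitch(b"", fragments)
print(log.decode(), end="")
# a.cpp: error
# b.cpp: warning
# c.cpp: warning
```

Because no fragment depends on what came before it, the jobs can finish in any order and the committed file still matches what a serial build would have produced.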

Directory read conflicts

Directory read operations are interesting from the perspective of conflict detection. Consider: what does it mean to read a directory? The directory has no content of its own, not in the way that a file does. Instead, the “content” of a directory is the list of files in that directory. To check for conflicts on a directory read, emake must check whether the list of files that the reader job actually saw matches the list that it would have seen had it run in serial order — in essence, doing a simple conflict check on each of the files in the directory.

That’s conceptually easy to do, but the implications of doing so are significant: it means that emake will declare a conflict on the directory read anytime any other job creates or deletes any file in that directory. Compare that to reads on ordinary files: you only get a conflict if the read happens before a write operation on the same file. With directories, you can get a conflict for modifications to other files entirely.

This is particularly dangerous because many tools actually perform directory reads under the covers, and often those tools are not actually concerned with the complete directory contents. For example, a job that enumerates files matching *.obj in a directory is only interested in files ending with .obj. The creation of a file named foo.a in that directory should not affect the job at all.
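The gap between what a strict directory check compares and what the job actually cares about can be shown with a small Python sketch. Both functions are hypothetical and exist only to illustrate the point; nothing here is meant to describe how emake evaluates directory reads.

```python
from fnmatch import fnmatchcase
from typing import Iterable, Set


def strict_dir_conflict(seen: Iterable[str], serial: Iterable[str]) -> bool:
    """Strict rule: any difference in the directory listing is a conflict."""
    return set(seen) != set(serial)


def matching(names: Iterable[str], pattern: str) -> Set[str]:
    """The subset of a listing that a pattern-based job would notice."""
    return {name for name in names if fnmatchcase(name, pattern)}


def pattern_dir_conflict(seen: Iterable[str], serial: Iterable[str],
                         pattern: str) -> bool:
    """What the job cares about: did the set of matching names change?"""
    return matching(seen, pattern) != matching(serial, pattern)


seen = ["main.obj", "util.obj"]             # listing the job read at runtime
serial = ["main.obj", "util.obj", "foo.a"]  # listing in strict serial order

print(strict_dir_conflict(seen, serial))            # True
print(pattern_dir_conflict(seen, serial, "*.obj"))  # False
```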

Another nasty example comes from utilities that implement their own version of the getcwd() system call. If you’re going to roll your own version, the algorithm looks something like this:

1. Let cwd = “”
2. Let current = “.”
3. Let parent = “./..”
4. Stat current to get its inode number.
5. Read parent until an entry matching that inode number is found.
6. Add the name from that entry to cwd.
7. Set current = parent.
8. Set parent = parent + “/..”
9. Repeat starting with step 4.

By following this algorithm the program can construct an absolute path for the current working directory. The problem is that the program has a read operation on every directory between the current directory and the root of the filesystem. If emake strictly adhered to conflict checking on directory reads, a job that used such a tool would be serialized against every job that created or deleted any file in any of those directories.
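For readers who want to see those directory reads happen, here is a hedged Python rendering of the steps above. It assumes a POSIX filesystem, compares device and inode numbers rather than inode alone so that mount points are handled, and stops when a directory and its parent are the same (the root). The name `manual_getcwd` is made up for this sketch; real tools implement this in C with their own variations.

```python
import os
from typing import List, Tuple


def manual_getcwd() -> Tuple[str, List[str]]:
    """Rebuild the working directory by walking up through '..'.

    Returns the absolute path and the list of directories that had to
    be read along the way.
    """
    names: List[str] = []
    dirs_read: List[str] = []
    current = "."
    while True:
        parent = os.path.join(current, "..")
        cur = os.stat(current)
        par = os.stat(parent)
        if (cur.st_dev, cur.st_ino) == (par.st_dev, par.st_ino):
            break  # "." and ".." are the same directory: this is the root
        dirs_read.append(parent)
        found = None
        with os.scandir(parent) as entries:  # the directory read
            for entry in entries:
                try:
                    st = entry.stat(follow_symlinks=False)
                except OSError:
                    continue  # entry vanished or is not accessible
                if (st.st_dev, st.st_ino) == (cur.st_dev, cur.st_ino):
                    found = entry.name
                    break
        if found is None:
            raise FileNotFoundError("no entry for %r in %r" % (current, parent))
        names.append(found)
        current = parent
    return "/" + "/".join(reversed(names)), dirs_read


path, dirs_read = manual_getcwd()
print(path)
print("directories read:", len(dirs_read))
```

Each element of `dirs_read` is a directory whose full listing the program had to scan, which is exactly the set of directory reads that strict conflict checking would have to validate.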

For this reason, emake deliberately ignores conflicts on directory read operations by default. Most of the time this is safe to do, surprisingly — often tools do not need a completely accurate list of the files in the directory. And in every case I’ve seen, even if the tool does require a perfectly correct list, the tool follows the directory read with reads of the files it finds. That means that you can ensure correct behavior by running the build one time with a single agent, to ensure the directory contents are correct when the job runs. That run will produce history based on the file reads, so subsequent builds can run with many agents and still produce correct results.

Starting with Accelerator 6.0, you can also use --emake-readdir-conflicts=1 to force emake to honor directory read conflicts.

Conclusion

Getting parallel builds that are fast is easy: just add -j to your make invocation. Getting parallel builds that are both fast and reliable is another story altogether. As you’ve seen, the core conflict detection algorithm in ElectricMake is simple, but after many years and hundreds of thousands of builds, we’ve enhanced that simple algorithm in a variety of special cases to provide even better performance. Future releases of ElectricAccelerator will include even more refinements to the algorithm.
